System Risk Assessment

Impact Assessment and Risk Assessment are two critical evaluations for AI systems in healthcare.

Impact Assessment identifies who will be affected by the AI system (doctors, nurses, IT staff, patients, and others) and analyzes how the system’s design, implementation, and operation will change their workflows, responsibilities, and decision-making processes.

Risk Assessment systematically evaluates what could go wrong, identifying potential technical, clinical, operational, and regulatory risks, assessing their severity and likelihood, and determining who would be harmed and how severely.

In Pacific AI, Risk Assessment is an AI-assisted workflow that automatically analyzes uploaded system documentation (architecture, design, quality assurance, operational procedures, policies) to generate structured risk reports. These reports enable Risk Managers and Compliance Officers to understand the system’s safety profile, prioritize mitigation strategies, and make informed deployment decisions.

Both assessments incorporate role-based analysis, which considers how risks manifest differently for each stakeholder role. Both also support periodic reassessment throughout the system lifecycle, so evaluations remain current as the AI system evolves.

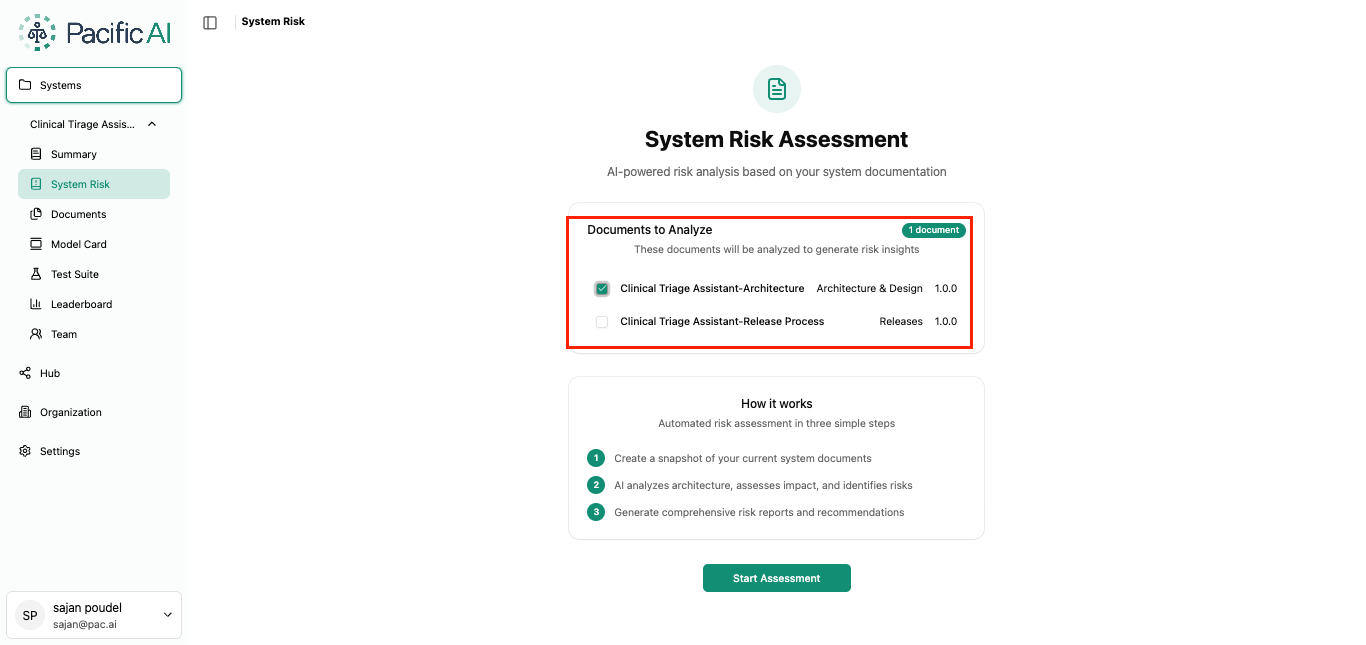

Initiate Risk Assessment

Navigate to System Risk within a specific system to start the guided risk assessment process. The workflow walks users through document selection, architecture review, impact analysis, and risk identification.

Select Relevant Documents

Select one or more Architecture and Design documents that will serve as the basis for analysis.

Click Start Assessment to initiate AI-powered risk evaluation using the selected documents.

Architecture Information

The system analyzes the selected documents and generates an Architecture Information snapshot.

The Risk Manager can:

- Review the generated summary

- Edit or refine architectural details

- Add missing information

- Remove irrelevant content

This validated architecture snapshot forms the foundation for further risk analysis.

Impact Assessment

Assess how the system affects different stakeholder roles.

Default roles include Doctor, Nurse, Administrator, Technician, Patient, and Radiologist. Custom roles can also be added if required.

The system generates an AI-based impact summary for each selected role. The Risk Manager reviews and edits the assessment before proceeding.

Risk Registry

Based on the Architecture Information and Impact Assessment, the system proposes risks aligned with Responsible AI principles.

Risk categories include:

- Fairness

- Accountability

- Transparency and Explainability

- Reliability and Robustness

- Safety

- Privacy and Security

- Accessibility

Users may use Auto Discovery to generate risks across all categories, or Guided Definition to focus on specific categories. They can also accept, reject, or edit proposed risks, and attach supporting evidence or additional explanation.
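As an illustration only, the registry described above can be modeled as a small data structure. This sketch is not Pacific AI's implementation or API: the names `RiskCategory`, `Decision`, `ProposedRisk`, and `accepted_by_category` are hypothetical, and only the seven category names and the accept, reject, edit, and attach-evidence actions come from this page.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class RiskCategory(Enum):
    """The seven risk categories listed on this page."""
    FAIRNESS = "Fairness"
    ACCOUNTABILITY = "Accountability"
    TRANSPARENCY_AND_EXPLAINABILITY = "Transparency and Explainability"
    RELIABILITY_AND_ROBUSTNESS = "Reliability and Robustness"
    SAFETY = "Safety"
    PRIVACY_AND_SECURITY = "Privacy and Security"
    ACCESSIBILITY = "Accessibility"


class Decision(Enum):
    """Hypothetical review states for a proposed risk."""
    PROPOSED = "proposed"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass
class ProposedRisk:
    """One registry entry (field names are assumptions, not the product schema)."""
    category: RiskCategory
    description: str
    decision: Decision = Decision.PROPOSED
    evidence: List[str] = field(default_factory=list)

    def accept(self) -> None:
        self.decision = Decision.ACCEPTED

    def reject(self) -> None:
        self.decision = Decision.REJECTED

    def edit(self, description: str) -> None:
        self.description = description


def accepted_by_category(risks: List[ProposedRisk]) -> Dict[RiskCategory, List[ProposedRisk]]:
    """Group accepted risks by category, skipping proposed and rejected ones."""
    grouped: Dict[RiskCategory, List[ProposedRisk]] = {}
    for risk in risks:
        if risk.decision is Decision.ACCEPTED:
            grouped.setdefault(risk.category, []).append(risk)
    return grouped
```

In this model, Auto Discovery would correspond to proposing entries for every `RiskCategory` member, and Guided Definition to proposing entries for a chosen subset.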

Summary and Risk Level

After completing all steps, the system generates a consolidated summary including:

- Architecture Information

- Impact Assessment

- Risk Registry

- Overall Risk Level

Reports can be reviewed online or downloaded for recordkeeping and audits.

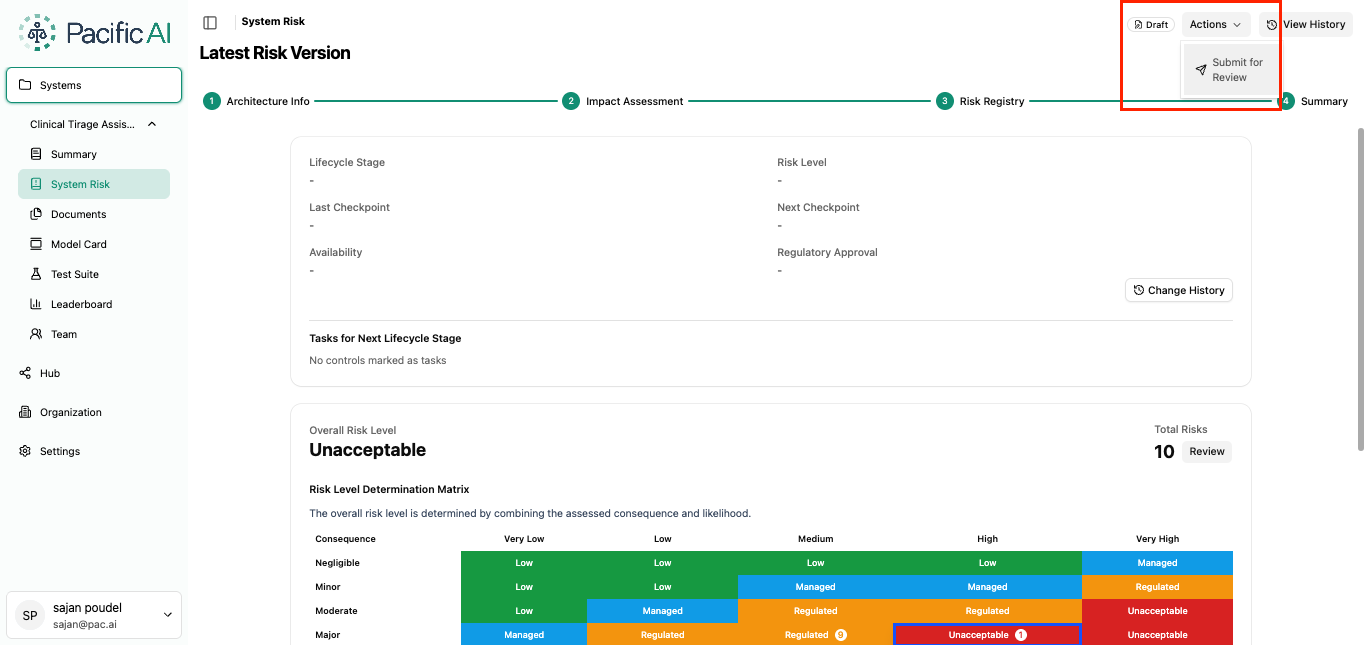

Submit for Review

Once the assessment is finalized, the Risk Manager selects Submit for Review from the Actions button at the top of the page. The assessment status changes from Draft to In Review.

Compliance Officer Review

The Compliance Officer may take one of the following actions:

Approve

Approve the assessment and complete required governance fields:

- Lifecycle Stage

- Last Checkpoint

- Next Checkpoint

- Availability (Not Released, Internal Use, External Use, Global)

- Regulatory Approval (Not Required, Required, Pending, Acquired)

The system automatically reflects the calculated risk level.
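The governance fields above can be pictured as a typed record. The following is a rough sketch under stated assumptions, not the product's schema: the class and field names are hypothetical, checkpoints are assumed to be calendar dates, Lifecycle Stage is kept as free text because its allowed values are not listed here, and the rule that Next Checkpoint falls after Last Checkpoint is an assumption. Only the Availability and Regulatory Approval options come from this page.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import List


class Availability(Enum):
    NOT_RELEASED = "Not Released"
    INTERNAL_USE = "Internal Use"
    EXTERNAL_USE = "External Use"
    GLOBAL = "Global"


class RegulatoryApproval(Enum):
    NOT_REQUIRED = "Not Required"
    REQUIRED = "Required"
    PENDING = "Pending"
    ACQUIRED = "Acquired"


@dataclass
class GovernanceFields:
    """Hypothetical record of the fields completed on approval."""
    lifecycle_stage: str          # free text: allowed values are not listed on this page
    last_checkpoint: date         # assumed to be a calendar date
    next_checkpoint: date         # assumed to be a calendar date
    availability: Availability
    regulatory_approval: RegulatoryApproval

    def problems(self) -> List[str]:
        """Return human-readable issues; an empty list means the record looks complete."""
        issues: List[str] = []
        if not self.lifecycle_stage.strip():
            issues.append("Lifecycle Stage is empty")
        # Assumed rule: the next checkpoint should be scheduled after the last one.
        if self.next_checkpoint <= self.last_checkpoint:
            issues.append("Next Checkpoint should fall after Last Checkpoint")
        return issues
```

Using enumerations for the two option lists keeps values outside the documented choices from being recorded by mistake.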

Reject

Reject the assessment and provide a reason or feedback for revision.

Return to Draft

Return the assessment to Draft status for updates. A comment is required to clarify the requested changes.
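The submit and review steps above amount to a small state machine, which can be sketched as follows. This is a minimal illustration, not Pacific AI's implementation: the `Status` enum, the action names, and `apply_action` are hypothetical. Draft and In Review are the statuses named on this page; the Approved and Rejected status names are assumptions, as is treating a comment as mandatory only for Return to Draft.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "Draft"
    IN_REVIEW = "In Review"
    APPROVED = "Approved"    # assumed status name
    REJECTED = "Rejected"    # assumed status name


# Allowed (current status, action) pairs and the status each one leads to.
TRANSITIONS = {
    (Status.DRAFT, "submit_for_review"): Status.IN_REVIEW,
    (Status.IN_REVIEW, "approve"): Status.APPROVED,
    (Status.IN_REVIEW, "reject"): Status.REJECTED,
    (Status.IN_REVIEW, "return_to_draft"): Status.DRAFT,
}

# Actions that cannot be taken without a comment.
COMMENT_REQUIRED = {"return_to_draft"}


def apply_action(status: Status, action: str, comment: str = "") -> Status:
    """Return the next status, or raise ValueError if the action is not allowed."""
    if (status, action) not in TRANSITIONS:
        raise ValueError(f"'{action}' is not allowed while status is {status.value}")
    if action in COMMENT_REQUIRED and not comment.strip():
        raise ValueError(f"'{action}' requires a comment")
    return TRANSITIONS[(status, action)]
```

For example, `apply_action(Status.DRAFT, "submit_for_review")` returns `Status.IN_REVIEW`, while calling `apply_action(Status.DRAFT, "approve")` raises `ValueError` because only an assessment in review can be approved.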

System Risk Assessment ensures structured documentation, clear accountability, and ongoing oversight of AI systems within the organization.